Key Insights

- AI deepfakes have become widespread over the last few months, especially in 2023.

- As with any new and advanced technology, there are many ways AI can be misused.

- Several individuals, companies, governmental organizations and NGOs, including Elon Musk, the FBI and the UN, have called for a regulatory body for AI.

- United Nations Secretary-General Antonio Guterres approved a proposal by some artificial intelligence entrepreneurs this week.

- This proposal will establish a worldwide AI “watchdog” organization to stop the unregulated creation, use and promotion of “dangerous” forms of AI.

Artificial intelligence (AI) has taken off in 2023.

And why wouldn’t it?

AI, including deepfake technology, has proven itself to be a powerful tool that can create amazing things, such as realistic images, videos, and text.

At this point, almost everyone has heard of the wonderful things that new AI tools like ChatGPT and Midjourney, among others, can do.

However, the truth remains that AI and deepfakes can also be used for harmful purposes, like spreading false information, hate speech, and propaganda. Put simply, AI in the wrong hands can do more damage than most people realize.

These are some of the concerns raised by the United Nations (UN) in a recent report released on 12 June about information integrity on digital platforms.

In this report, the United Nations said that the risk of misinformation online has “intensified” because of “rapid advancements in technology, such as generative artificial intelligence.”

And of these risks associated with generative AI, deepfakes can do the most damage.

How Much Damage Can Deepfakes Do?

Deepfakes are AI-generated videos that can manipulate the appearance and voice of anyone. It doesn’t matter whether they’re an average Joe on the street or the president of the US.

Deepfakes can be used to impersonate celebrities, politicians, or ordinary people, and make them say or do things that they never did.

Deepfakes carry a lot of risks, and fraud and impersonation are the least of them.

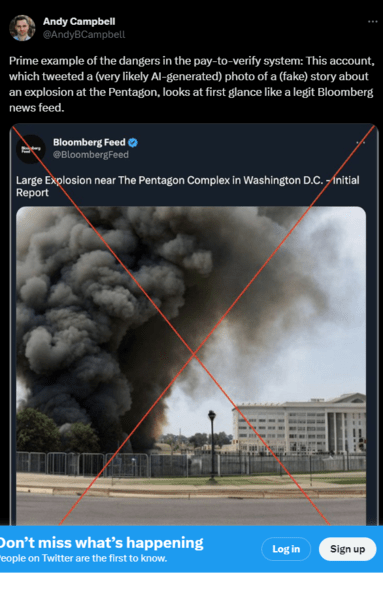

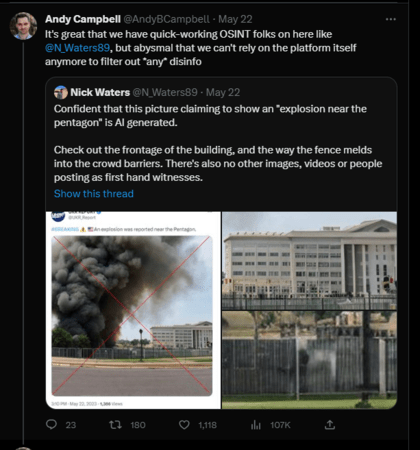

Deepfakes pose a serious and urgent threat to information integrity, especially on social media platforms, where they can spread quickly and uncontrollably.

Soon after this tweet hit the internet, the S&P 500 briefly hit fresh lows, before correcting itself.

Even The FBI Says AI Is A Threat

According to the FBI, the bureau is getting reports from victims, including small children and non-consenting adults, whose social media content is being converted into adult content by deepfake tools.

According to reports, doctored photographs and videos of non-consenting adults are being widely circulated on social media and pornographic websites.

“The photos are then sent directly to the victims by malicious actors for sextortion or harassment, or until they are discovered on the internet by themselves. Once distributed, victims may have considerable hurdles in stopping the modified information from being shared indefinitely or being removed from the internet,” the FBI said in a June 5 public service announcement.

Photo modification has become a lot easier with image tools such as Adobe Photoshop, which now has generative AI capabilities, and OpenAI’s DALL-E.

Overall, while AI has several important use cases that are slowly becoming part of our daily lives, it can also be badly abused.

The UN’s Response

Since ChatGPT launched six months ago and became the fastest-growing AI app of all time, generative AI technology that can create any kind of response from text prompts has fascinated the public.

Other AI tools can do several other things like generating realistic images and building entire apps in seconds.

Concerns have also been raised about AI’s potential to generate deepfake images and other falsehoods.

On Monday, United Nations Secretary-General Antonio Guterres approved a proposal by some artificial intelligence entrepreneurs, as shown by the video attached to this tweet.

This proposal aims to establish a worldwide AI “watchdog” organization similar to the International Atomic Energy Agency (IAEA).

The UN report also called for urgent and immediate action from all stakeholders, including governments, digital platforms, civil society, academia, and media, to ensure the responsible use of AI and address its implications for information integrity.

“Alarm bells are ringing loudly about the latest form of artificial intelligence, generative AI. And they are loudest from the designers,” Guterres told reporters. “We must take those warnings very seriously.”

Guterres also said that the era of Silicon Valley’s ‘move fast and break things’ philosophy must be brought to a close, and that “business as usual” is not an option when it comes to a technology as powerful as AI.

The UN’s report also proposed some measures, such as:

- Developing voluntary guidelines and standards for generative AI

- Enhancing transparency and accountability of AI systems

- Promoting digital literacy and critical thinking among users

- Strengthening fact-checking and verification mechanisms

- Protecting the rights and dignity of individuals affected by deepfakes

Guterres said that it is time to establish a UN Code of Conduct for all digital platforms, a set of principles that he hopes will be implemented voluntarily by all stakeholders.

This new Code of Conduct will be developed ahead of the “Summit of the Future”, a conference to be held in late September 2024 that aims to host intergovernmental discussions on various issues related to AI.

Disclaimer: Voice of Crypto aims to deliver accurate and up-to-date information, but it will not be responsible for any missing facts or inaccurate information. Cryptocurrencies are highly volatile financial assets, so research and make your own financial decisions.