Key Insights

- OpenAI’s new AI model, Sora, can generate realistic videos from text. Is this good for crypto?

- This is impressive, but still raises concerns about misuse in crypto scams.

- Sora can generate detailed, high-resolution scenes and manipulate characters, making it powerful fuel for deepfakes.

- Scammers could use Sora to impersonate celebrities, create fake identities, and manipulate victims into investing in rug pulls.

- OpenAI may need to implement safeguards to prevent malicious use of Sora in the crypto market.

OpenAI just unveiled one of the most impressive generative AIs of 2024.

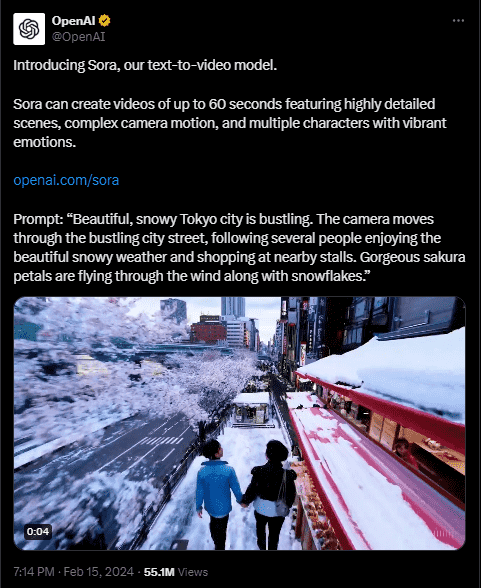

This AI model is called Sora, and it can generate realistic videos from simple text prompts.

Speculation and amazement have been flying around X/Twitter all day. Here are some ways this new AI could become nightmare fuel for the crypto space.

Say Hi To Sora

OpenAI is best known as the developer of ChatGPT, which drew the same kind of worldwide attention.

The company moved into video generation on February 15, when it announced Sora.

Unlike other video generation tools out there, Sora can seamlessly create detailed and hyperrealistic videos from simple text prompts, extend pre-made videos, and even generate scenes based on mere images.

Sora is touted as being able to generate HD scenes at resolutions of up to 1080p, with multiple characters, specific types of motion, and highly detailed objects and backgrounds.

There Are Still Some Creases To Iron Out

While the world is no doubt impressed with what this AI model can do, OpenAI has been open about some of its current limitations.

For example, OpenAI says in its announcement post that the model hasn’t quite figured out how to simulate the physics of complicated scenes yet.

This leads to some flaws in cause and effect.

OpenAI cited an example in which Sora generated a video of a person taking a bite out of a cookie without leaving a bite mark.

OpenAI also says that Sora might struggle to interpret spatial details and might confuse left and right.

With these limitations and other factors in mind, OpenAI currently offers highly restricted access to Sora, making it available only to “red teamers” who assess other potential pitfalls.

The Dark Side Of Video Generating AIs

Sora is impressive, as shown by the thousands of videos currently circulating on Twitter/X.

As a generative AI tool, Sora can be used for a wide range of purposes, such as construction, entertainment, comedy and satire, education, and healthcare.

However, it can also be used in several other “less than ideal” ways.

In the past, video-generating AIs not nearly as powerful as Sora have been used to create deepfakes in which faces are swapped, voices are changed, and scenes that never happened are generated.

Such a powerful video-generating tool can be used for crimes in crypto (and elsewhere), including but not limited to scams, misinformation, blackmail, and market manipulation.

For example, scammers can use these video tools to create fake videos of celebrities and influencers promoting a crypto project and asking investors to “go all in”.

Moreover, scammers can use videos like these to create fake identities or build fake relationships with their victims.

In January, an employee was invited to a business video call in which scammers impersonated company executives and demanded $25 million.

Bitdefender Labs has published similar reports of cybercriminals using AI to create deepfakes of known figures and celebrities, hijack YouTube channels, and steal money from victims through “Double Your Crypto” scams.

Overall, OpenAI might be forced to put guardrails on Sora to prevent its misuse in crypto scams and thefts.

Disclaimer: Voice of Crypto aims to deliver accurate and up-to-date information, but it will not be responsible for any missing facts or inaccurate information. Cryptocurrencies are highly volatile financial assets, so research and make your own financial decisions.